About the position

In this role, you'll work in one of our IBM Consulting Client Innovation Centers (Delivery Centers), where we deliver deep technical and industry expertise to a wide range of public and private sector clients around the world. Our delivery centers offer our clients locally based skills and technical expertise to drive innovation and adoption of new technology. A career in IBM Consulting is rooted in long-term relationships and close collaboration with clients across the globe. You'll work with visionaries across multiple industries to improve the hybrid cloud and AI journey for the most innovative and valuable companies in the world. Your ability to accelerate impact and make meaningful change for your clients is enabled by our strategic partner ecosystem and our robust technology platforms across the IBM portfolio, including Software and Red Hat. Curiosity and a constant quest for knowledge serve as the foundation for success in IBM Consulting. In your role, you'll be encouraged to challenge the norm, investigate ideas outside of your role, and come up with creative solutions resulting in groundbreaking impact for a wide network of clients. Our culture of evolution and empathy centers on long-term career growth and development opportunities in an environment that embraces your unique skills and experience.

Responsibilities

- Design, build, optimize, and support new and existing data models and ETL processes based on our clients' business requirements.

- Build, deploy, and manage data infrastructure that can adequately handle the needs of a rapidly growing, data-driven organization.

- Coordinate data access and security so that data scientists and analysts can easily access data whenever they need to.

Requirements

- Must have 3-5 years of experience in Big Data: Hadoop, Spark, Scala, Python.

- Experience with HBase, Hive.

- Good to have: experience with AWS (S3, Athena, DynamoDB, Lambda), Jenkins, and Git.

- Experience developing Python and PySpark programs for data analysis.

- Good working experience using Python to develop custom frameworks for rule generation.

- Experience developing Python code to gather data from HBase and designing solutions to implement with PySpark.

- Experience using Apache Spark DataFrames/RDDs for business transformations and Hive Context objects for read/write operations.

Nice-to-haves

- Understanding of DevOps.

- Experience in building scalable end-to-end data ingestion and processing solutions.

- Experience with object-oriented and/or functional programming languages, such as Python, Java, and Scala.

Job Keywords

Hard Skills

- Git

- Java

- Jenkins

- Python

- Scala

Soft Skills

- ZvwAk7 XCjlTB4m

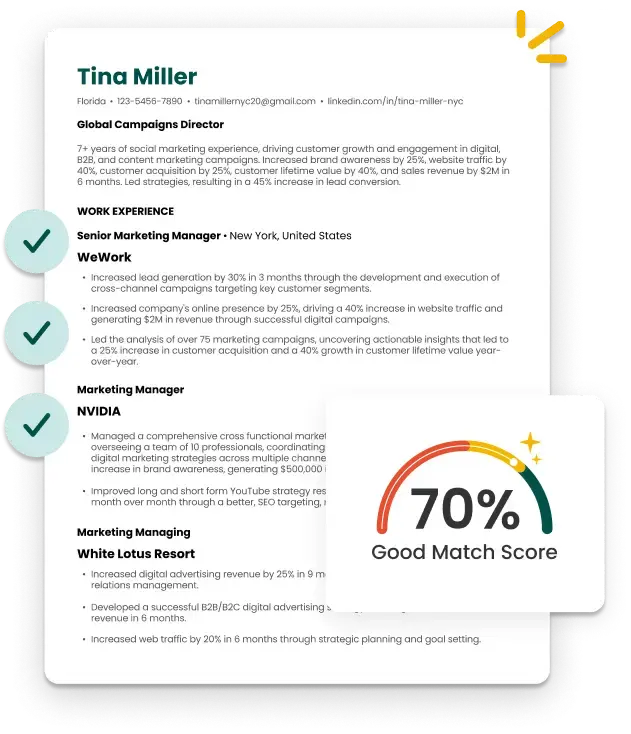

A Smarter and Faster Way to Build Your Resume