This job is closed

We regret to inform you that the job you were interested in has been closed. Although this specific position is no longer available, we encourage you to continue exploring other opportunities on our job board.

About the position

User Protection is an organization dedicated to protecting Google's users from abuse, account compromise and other harms online. Our team works with the Content Safety Platform (CSP) pillar, which develops tools to protect users from abusive content at scale - often leveraging AI technology to do so. Our team provides data science capabilities to Content Safety Platform, and works directly with product and engineering to evaluate, understand, and improve the quality of our protections. Organizationally, we are a part of a large data science team in Core, which provides ample opportunities for knowledge sharing, development, and learning from other data scientists working in adjacent domains.

Content Safety Platform equips Google products with tools to protect users from abuse and harm. As a Data Scientist working with CSP, you'll be helping to evaluate, understand, and improve our abuse protections - which are generally built with and for AI tools. CSP Data Scientists work closely with cross-functional product teams on specific content safety classifiers, but also on generic strategies and tooling for understanding content safety classifiers. Product safety is critical to the success of nearly all of Google's products - especially novel AI tools.

Responsibilities

- Collaborate with stakeholders in cross-project and team settings to identify and clarify the business or product questions to answer. Provide feedback to translate and refine business questions into tractable analyses, evaluation metrics, or mathematical models.

- Apply specialized knowledge to use custom data infrastructure or existing data models as appropriate. Design and evaluate models to mathematically express and solve defined problems with limited precedent.

- Gather information, business goals, priorities, and organizational context around the questions to answer, as well as the existing and upcoming data infrastructure.

- Own the process of gathering, extracting, and compiling data across sources via relevant tools (e.g., SQL, R, Python). Format, re-structure, and/or validate data to ensure quality, and review the dataset to ensure it is ready for analysis.

Requirements

- Master's degree in Statistics, Mathematics, Physics, Economics, Operations Research, Engineering, or a related quantitative field.

- Experience in Artificial Intelligence or Machine Learning.

- Experience coding in Python.

Nice-to-haves

- 2 years of work experience using analytics to solve product or business problems, coding (e.g., Python, R, SQL), querying databases, or performing statistical analysis.

- Familiarity with approaches to evaluate the performance of machine learning classifiers and familiarity with explainable AI techniques.

- Ability to translate the business objectives in a content classification problem to an evaluation methodology.

Benefits

- The US base salary range for this full-time position is $118,000-$170,000 + bonus + equity + benefits.

- Salary ranges are determined by role, level, and location.

- Individual pay is determined by work location and additional factors, including job-related skills, experience, and relevant education or training.

Job Keywords

Hard Skills

- Database Queries

- Google Tools

- Python

- R

- SQL

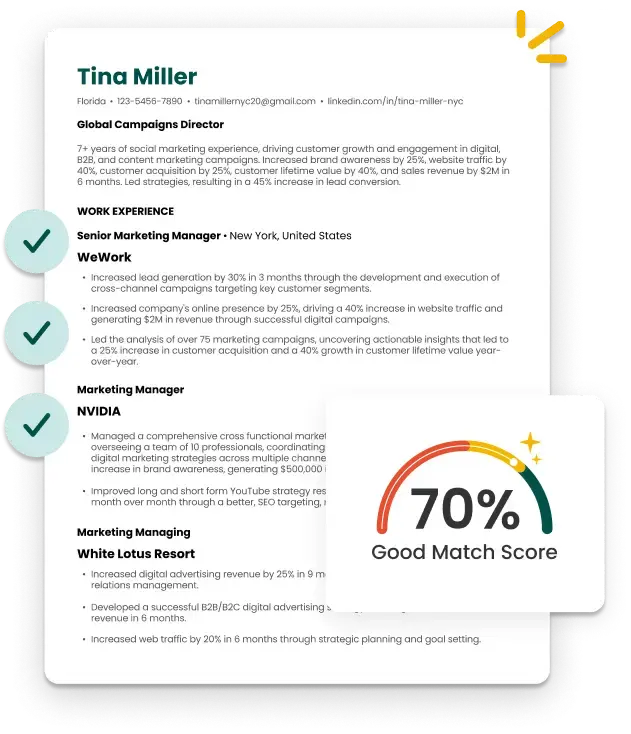

A Smarter and Faster Way to Build Your Resume